Form Recognizer - Cognitive Service and integration in Office 365

In Azure, Microsoft already offers a variety of cognitive services, such as voice and face recognition, that detect language, faces, emotions, age and more. With the Form Recognizer cognitive service, Microsoft has extended its portfolio and makes it possible to accelerate existing business processes through automation.


Microsoft Form Recognizer automatically extracts information from documents using trained machine learning models. Whether the input is a PDF or a JPEG, keys and values can be analyzed and processed, which eliminates the need for manual labeling and evaluation. You simply upload sample documents to train a model, and the service then applies it to recognize future documents of the same type. The Form Recognizer service is currently in preview and can be activated in the Azure portal.

Preparation and Service Creation

First, to analyze our own forms and extract data from them, the AI has to be trained on these user-defined forms. The Form Recognizer service has a few prerequisites for this: training requires either five filled-in forms (with different values) or two filled-in forms plus one empty form.

There are further requirements, for example regarding resolution and language. You can find them on the Microsoft Docs page of the Form Recognizer service.

The next step is preparing and training the service. Both the complete configuration and the later consumption of the service are currently only possible via the API. There is no GUI for this service, but there is very good and comprehensive API documentation. To provide the five sample forms, you can choose between several upload options. I decided on a relatively simple one: an Azure Storage Account (Blob Storage) combined with the Form Recognizer web console.

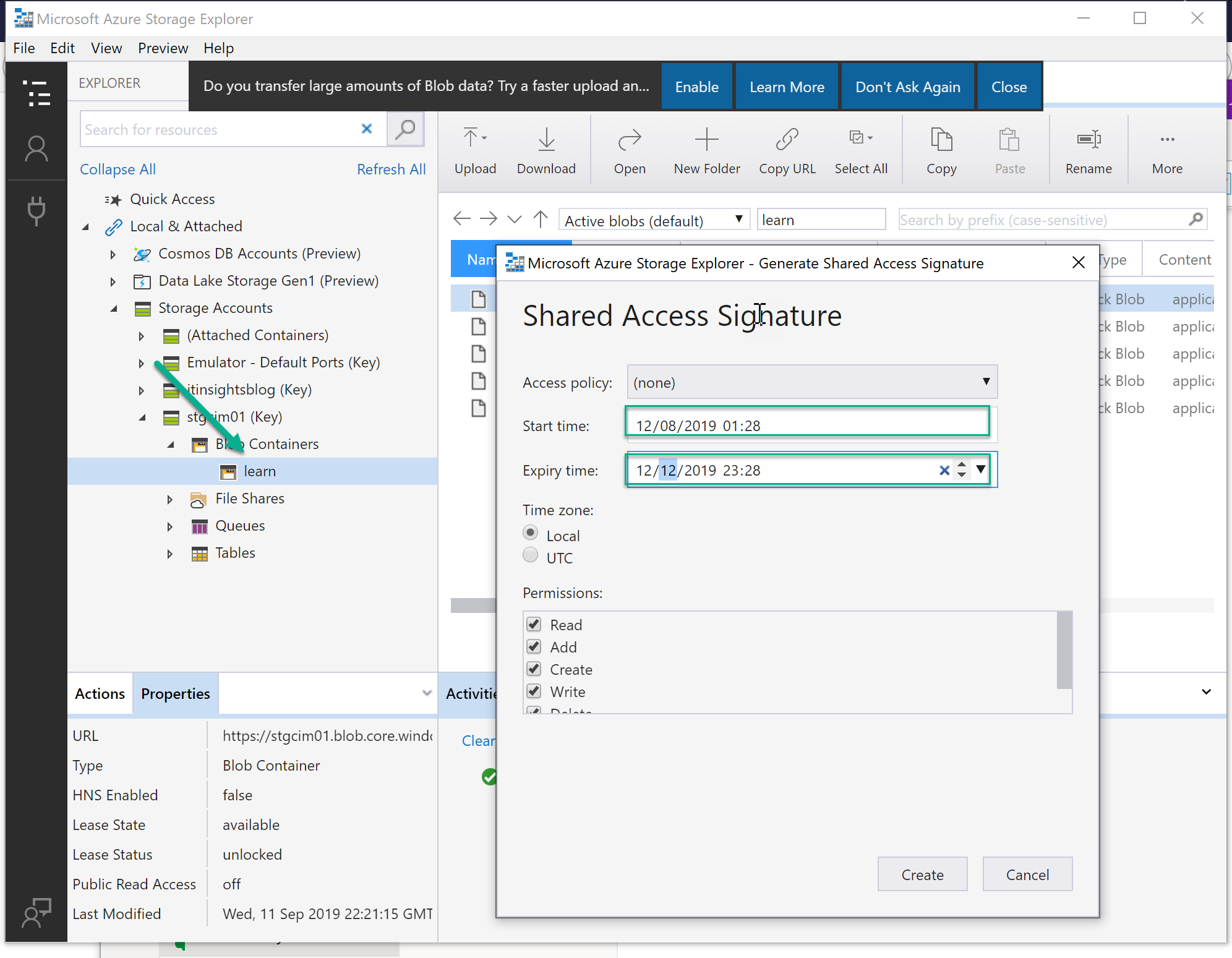

To do this, create a storage account with a blob container named “learn”. To give the service access to this container, a Shared Access Signature (SAS) URL is required. You can generate it by adding the storage account to Azure Storage Explorer and using its SAS generation feature. When generating the SAS URL for the “learn” container, make sure the start and expiry times are correct with regard to your current time zone. Then upload the five sample forms into the “learn” blob container.
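The time zone matters because the SAS start and expiry times (the `st` and `se` query parameters) are interpreted as UTC, so a token created with local times can be not yet valid or already expired. The following is a minimal Python sketch, using only the standard library, that checks whether a SAS URL is valid at a given moment. The function names are hypothetical helpers, not part of any Azure SDK.

```python
from datetime import datetime, timezone
from typing import Optional
from urllib.parse import parse_qs, urlparse

# ISO 8601 forms accepted for SAS 'st'/'se' values; all are interpreted as UTC
_SAS_TIME_FORMATS = ("%Y-%m-%dT%H:%M:%SZ", "%Y-%m-%dT%H:%MZ", "%Y-%m-%d")


def parse_sas_time(value: str) -> datetime:
    """Turn a SAS time string into a timezone-aware UTC datetime."""
    for fmt in _SAS_TIME_FORMATS:
        try:
            return datetime.strptime(value, fmt).replace(tzinfo=timezone.utc)
        except ValueError:
            continue
    raise ValueError(f"Unrecognized SAS time format: {value!r}")


def sas_is_valid_at(sas_url: str, moment: Optional[datetime] = None) -> bool:
    """Return True if 'moment' (default: now, UTC) lies within the SAS start/expiry window."""
    moment = moment or datetime.now(timezone.utc)
    params = parse_qs(urlparse(sas_url).query)  # also URL-decodes '%3A' to ':'
    if "se" not in params:
        raise ValueError("SAS URL has no 'se' (expiry) parameter")
    if "st" in params and moment < parse_sas_time(params["st"][0]):
        return False  # start lies in the future, e.g. a local time entered as UTC
    return moment < parse_sas_time(params["se"][0])
```

If `sas_is_valid_at(sas_url)` returns `False` right after generating the token, the start time was most likely entered in local time.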

I use the Microsoft example data set for provisioning and testing. It consists of five invoice forms.

The next step is to create the Form Recognizer cognitive service in the Azure portal: create a new Form Recognizer (Preview) service and fill in the required fields as shown in the graphic.

Wait until the deployment is complete, then open the new Form Recognizer resource and note the endpoint shown in the overview as well as the key (Ocp-Apim-Subscription-Key); both are needed in the next steps.

Train AI

Now let’s get to the training of the service with the user-defined forms. For this we use the Form Recognizer Web Console.

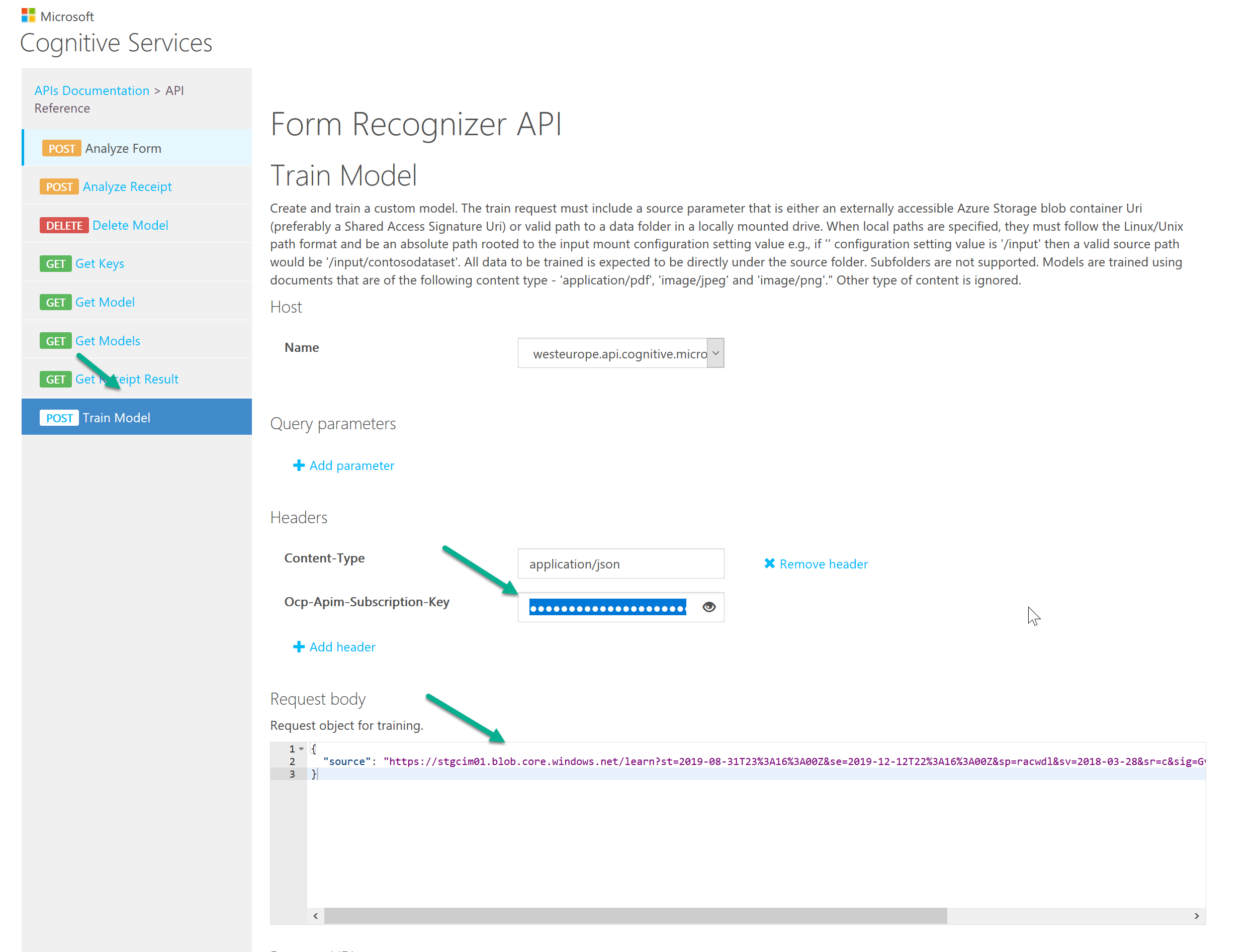

Select “Train Model” and your location (WestEurope) from the navigation bar on the left.

In the Ocp-Apim-Subscription-Key field, enter the access key of your Form Recognizer resource. In the Request body area, specify the Azure Storage blob container from which the sample forms are loaded by entering your SAS URL as shown in the example below:

```json
{
  "source": "<SAS URL of the learn container>"
}
```

Then execute the API call. It should report a successful import of the sample forms and return an ID. The “modelID” identifies the recognition model that machine learning generated from the uploaded user-defined example forms. Each new training run with the example forms generates a new unique ID. Make a note of the ID, as it is required for analyzing the forms.
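The console call can also be reproduced in code. Below is a minimal Python sketch, standard library only, that builds the train request and reads the model ID from the JSON reply. It assumes the v1.0-preview route `/formrecognizer/v1.0-preview/custom/train` and a response containing `modelId`, a `trainingDocuments` list with a per-document `status`, and an `errors` list; verify these against the API reference for the API version you use. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumed v1.0-preview route prefix; later API versions use different paths
CUSTOM_ROUTE = "/formrecognizer/v1.0-preview/custom"


def build_train_request(endpoint: str, key: str, sas_url: str) -> urllib.request.Request:
    """Build (without sending) the POST request that trains a model from the blob container."""
    return urllib.request.Request(
        url=endpoint.rstrip("/") + CUSTOM_ROUTE + "/train",
        data=json.dumps({"source": sas_url}).encode("utf-8"),
        method="POST",
        headers={
            "Ocp-Apim-Subscription-Key": key,  # key of the Form Recognizer resource
            "Content-Type": "application/json",
        },
    )


def model_id_from_train_response(body: str) -> str:
    """Return the modelId, or raise if any sample document was not used for training."""
    result = json.loads(body)
    failed = [doc.get("documentName") for doc in result.get("trainingDocuments", [])
              if doc.get("status") != "success"]
    if failed or result.get("errors"):
        raise RuntimeError(f"Training problems: failed={failed}, errors={result.get('errors')}")
    return result["modelId"]
```

Sending the request is then a matter of `urllib.request.urlopen(req)` and passing the decoded response body to `model_id_from_train_response`.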

Analyze Form

Now we come to the form analysis. The form recognition was trained in the previous steps and can now be used. As described at the beginning, the Form Recognizer service can only be used via the API, so we use Microsoft Flow to extract the data from the forms and process it further.

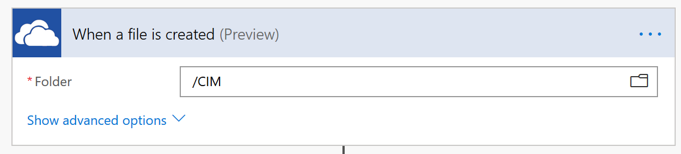

In this case, I use OneDrive for Business to provide the data for analysis: a file stored in a defined OneDrive folder is passed to the Form Recognizer API and processed.

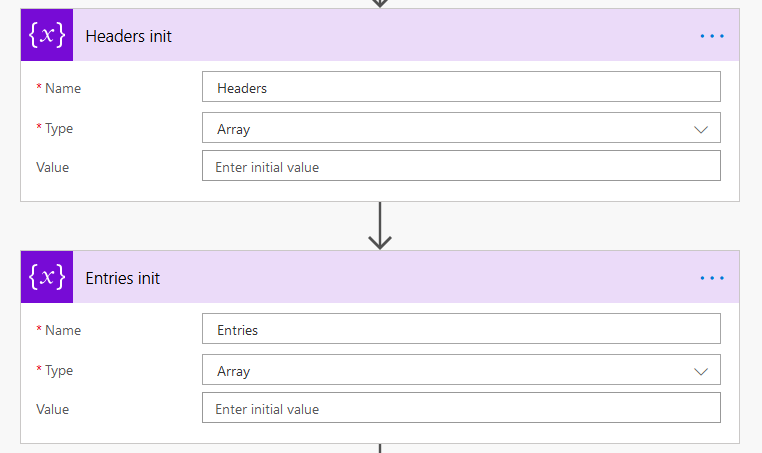

Next, we define the variables needed for the extraction and transfer. Because the example data I use contains a table, I created variables for its headers and entries. Which and how many variables you need depends, of course, on the information you want to process.

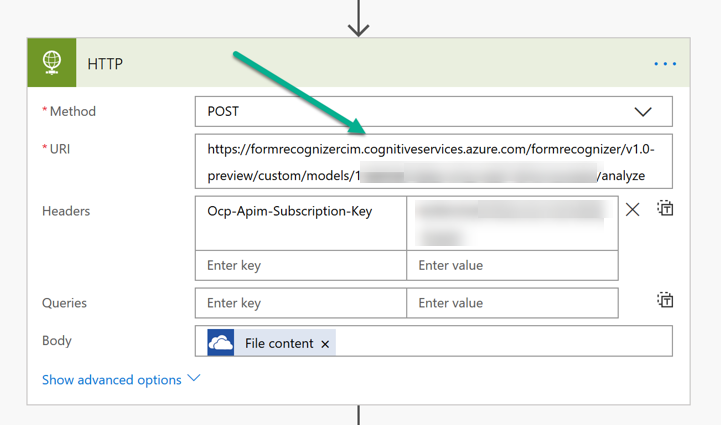

Then we integrate the Form Recognizer service into the flow. The data from OneDrive is passed to the service via HTTP. We create an HTTP action, select the method “POST” and configure the URL based on our endpoint, including the generated model ID:

```
{endpoint}/formrecognizer/v1.0-preview/custom/models/{modelID}/analyze
```

Furthermore, we enter our Form Recognizer service key as the Ocp-Apim-Subscription-Key header, and for the body we select the OneDrive for Business “File Content”. Setting a Content-Type value is not required in Microsoft Flow.
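Outside of Flow, the same HTTP call has to carry the file bytes and should set the Content-Type explicitly. A minimal Python sketch, assuming the v1.0-preview analyze route and the PDF, JPG and PNG formats mentioned in this post; `build_analyze_request` and `CONTENT_TYPES` are hypothetical names.

```python
import os
import urllib.request
from urllib.parse import quote

# Assumed v1.0-preview route prefix (same as for training)
CUSTOM_ROUTE = "/formrecognizer/v1.0-preview/custom"

# Content types for the file formats mentioned in this post
CONTENT_TYPES = {
    ".pdf": "application/pdf",
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".png": "image/png",
}


def build_analyze_request(endpoint: str, key: str, model_id: str,
                          file_name: str, file_content: bytes) -> urllib.request.Request:
    """Build (without sending) the POST request that analyzes one file with a trained model."""
    extension = os.path.splitext(file_name)[1].lower()
    if extension not in CONTENT_TYPES:
        raise ValueError(f"Unsupported file type: {extension or file_name}")
    url = f"{endpoint.rstrip('/')}{CUSTOM_ROUTE}/models/{quote(model_id, safe='')}/analyze"
    return urllib.request.Request(
        url=url,
        data=file_content,  # raw bytes, like the OneDrive "File Content" in the flow
        method="POST",
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": CONTENT_TYPES[extension],  # set explicitly outside of Flow
        },
    )
```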

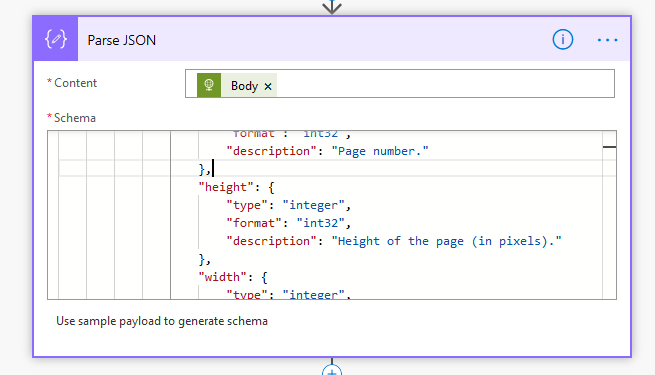

Once the HTTP action has been created, we use its body as the content of a Parse JSON action.

The Parse JSON action also needs a schema for the response, which can be found in Microsoft's API description (form-recognizer-schema.json).
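In code, the Parse JSON step corresponds to decoding the body and checking that the fields used later are present. A minimal Python sketch that does not reproduce Microsoft's full schema; it only assumes the response has a `pages` array and reports problems in a top-level `errors` array. `parse_analyze_response` is a hypothetical helper.

```python
import json
from typing import Any, Dict


def parse_analyze_response(body: str) -> Dict[str, Any]:
    """Decode the analyze response and check the parts the later loops rely on."""
    result = json.loads(body)
    # Assumption: the preview API lists problems in a top-level 'errors' array
    if result.get("errors"):
        raise RuntimeError(f"Form Recognizer reported errors: {result['errors']}")
    # The 'Apply to each' steps iterate over 'pages', so it must be a list
    if not isinstance(result.get("pages"), list):
        raise ValueError("Response has no 'pages' array")
    return result
```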

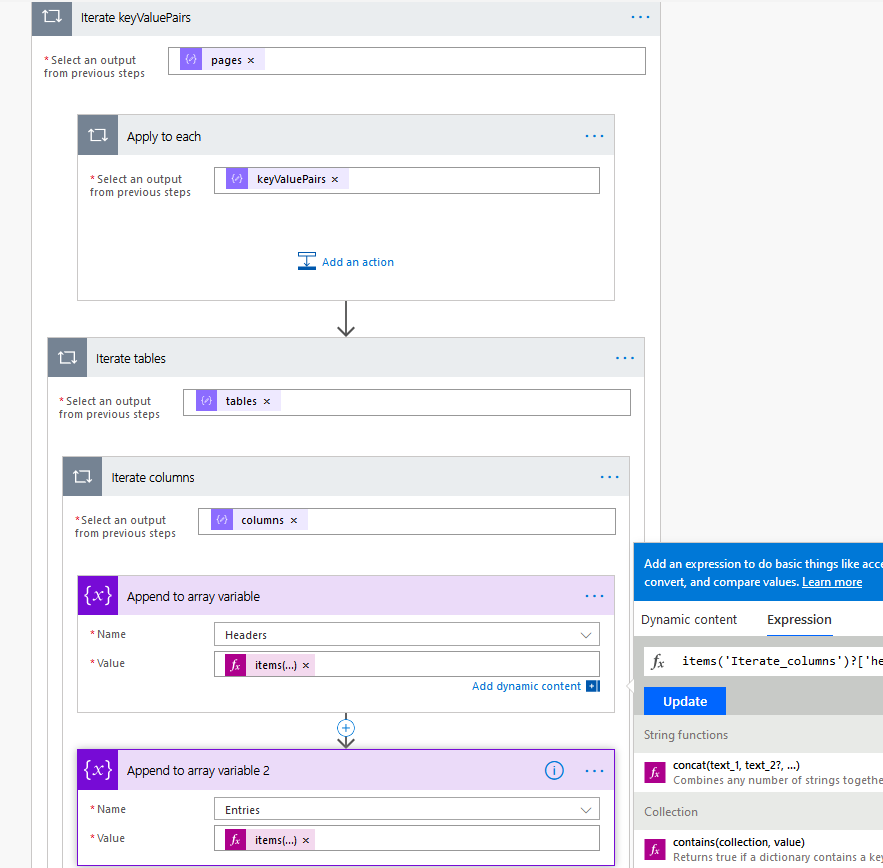

To analyze the required information, it has to be extracted from the JSON output of the previous step using “Apply to each” actions. The output is iterated over, and the headers and values of the table are assigned to the variables defined in the previous steps.
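The nested loops can be sketched in Python as follows. The sketch assumes the response nests `pages`, then `tables`, then `columns`, where each column has a `header` list of text fragments and an `entries` list of rows (each row a list of fragments); these are the same paths the Flow expressions for Headers and Entries read, but check them against your own output. The function name is hypothetical.

```python
from typing import Any, Dict, List, Tuple


def extract_table_columns(result: Dict[str, Any]) -> Tuple[List[str], List[str]]:
    """Collect one header and one entry per table column, like the nested 'Apply to each' loops."""
    headers: List[str] = []  # counterpart of the Headers variable
    entries: List[str] = []  # counterpart of the Entries variable
    for page in result.get("pages", []):
        for table in page.get("tables", []):
            for column in table.get("columns", []):  # the 'Iterate_columns' loop
                headers.append(column["header"][0]["text"])
                entries.append(column["entries"][0][0]["text"])
    return headers, entries
```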

The Headers variable is set with the following expression:

```
items('Iterate_columns')?['header'][0]['text']
```

The Entries variable is set with the following expression:

```
items('Iterate_columns')?['entries'][0][0]['text']
```
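Note that `[0][0]` in the Entries expression returns only the first text fragment of the first row of each column, and `[0]` in the Headers expression only the first fragment of the header. If your tables have several rows, a variant that keeps every row may be more useful. A Python sketch under the same assumptions about the response structure; the helper names are hypothetical.

```python
from typing import Any, Dict, List


def header_text(column: Dict[str, Any]) -> str:
    """Join all header fragments instead of taking only ['header'][0]['text']."""
    return " ".join(fragment["text"] for fragment in column.get("header", []))


def entry_texts(column: Dict[str, Any]) -> List[str]:
    """Return one string per row instead of only ['entries'][0][0]['text']."""
    return [" ".join(fragment["text"] for fragment in row)
            for row in column.get("entries", [])]
```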

The information that the Form Recognizer service extracts from the PDF, JPG or PNG file is now available for further processing and transfer, for example to a SharePoint list, Dynamics, or any other Flow connector.